Oh the drama! Some of the current hyperventilating in the alternative nutrition community–sugar is toxic, insulin is evil, vegetable oils give you cancer, and running will kill you–has, much to my dismay, made the alternative nutrition community sound as shrill and crazed as the mainstream nutrition one.

When you have self-appointed nutrition experts like food writer Mark Bittman agreeing feverishly with a pediatric endocrinologist with years of clinical experience like Robert Lustig, we’ve crossed over into some weird nutrition Twilight Zone where fact, fantasy, and hype all swirl together in one giant twitter feed of incoherence meant, I think, to send us into a dark corner where we can do nothing but nibble on organic kale, mumble incoherently about inflammation and phytates, and await the zombie apocalypse.

No, carbohydrates are not evil—that’s right, not even sugar. If sugar were rat poison, one trip to the county fair in 4th grade would have killed me with a cotton candy overdose. Neither is insulin, now characterized as the serial killer of hormones (try explaining that to a person with type 1 diabetes).

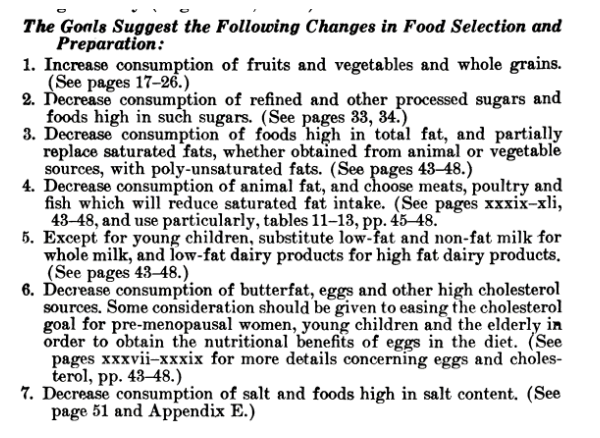

But that doesn’t mean that 35 years of dietary advice to increase our grain and cereal consumption, while decreasing our fat and saturated fat consumption, has been a good idea.

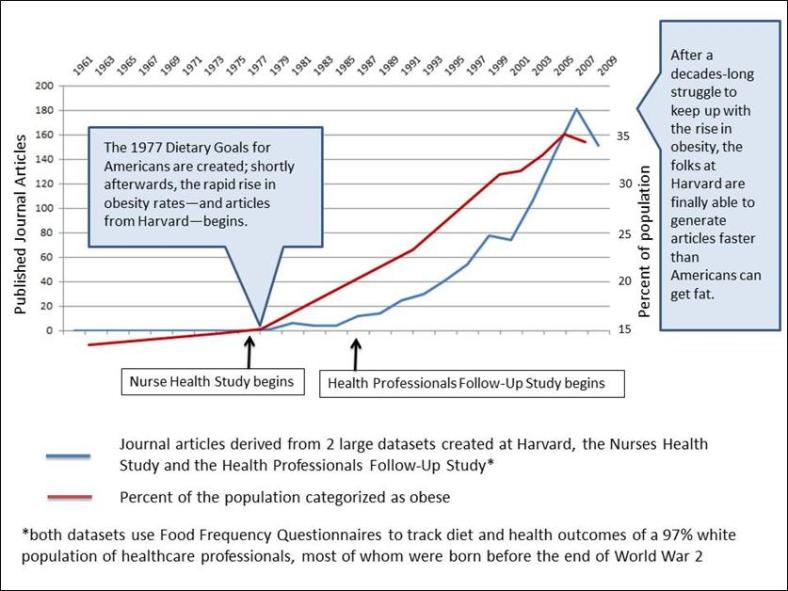

I have gotten rather tired of seeing this graph used as a central rationale for arguing that the changes in total carbohydrate intake over the past 30 years have not contributed to the rising rates of obesity.

The argument takes shape on 2 fronts:

1) We ate 500 grams of carbohydrate per day in 1909 and 500 grams in 1997 and WE WEREN’T FAT IN 1909!

2) The other part of the argument is that the TYPE of carbohydrate has shifted over time. In 1909, we ate healthy, fiber-filled unrefined and unprocessed types of carbohydrates. Not like now.

Okay, let’s take a closer look at that paper, shall we? And then let’s look at what really matters: the context.

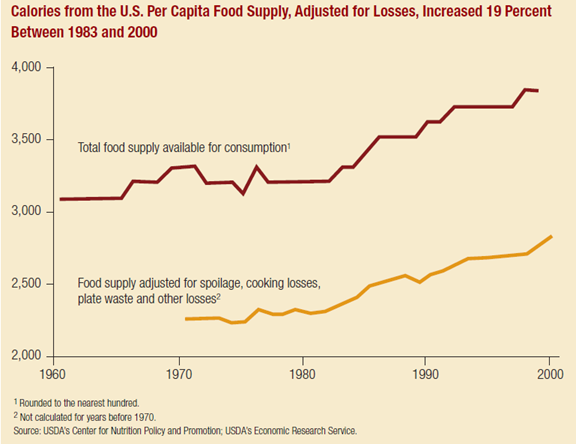

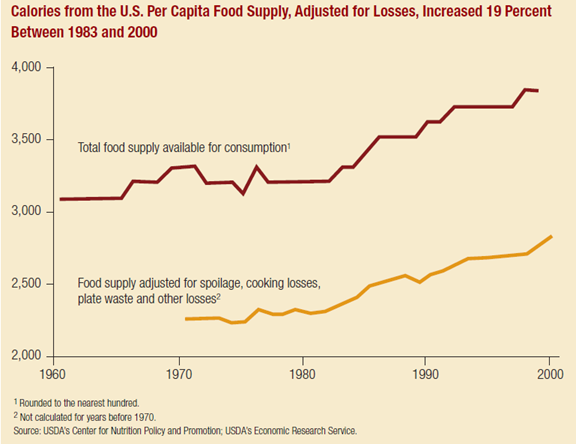

The data used to make this graph are not consumption data, but food availability data. This is problematic in that it tells us how much of a nutrient was available in the food supply in any given year, but does not account for food waste, spoilage, and other losses. And in America, we currently waste a lot of food.

According to the USDA, we currently lose over 1000 calories per person per day from our food supply–calories that don’t make it into our mouths. Did we waste the same percentage of our food supply across the entire century? Truth is, we don’t know and we are not likely to find out–but I seriously doubt it. My mother and both my grandmothers–with memories of war and rationing fresh in their minds–would be no more likely to throw out anything remotely edible than they would be to do the Macarena. My mother has been known to put random bits of leftover food in soups, sloppy joes, and–famously–pancake batter. To this day, should your hand begin to move toward the compost bucket with a tablespoon of mashed potatoes scraped from the plate of a grandchild shedding cold virus like it was last week’s fashion, she will throw herself in front of the bucket and shriek, “NOOOOOO! Don’t throw that OUT! I’ll have that for lunch tomorrow.”

You know what this means folks: in 1909, we were likely eating MORE carbohydrate than we are today. (Or maybe in 1909, all those steelworkers pulling 12 hour days 7 days a week, just tossed out their sandwich crusts rather than eat them. It could happen.)

BUT–as with butts all over America including mine, it’s a really Big BUT: How do I explain the fact that Americans were eating GIANT STEAMING HEAPS OF CARBOHYDRATES back in 1909—and yet, and yet—they were NOT FAT!!??!!

Okay. Y’know. I’m up for this one. Not only is it problematic to the point of absurdity to compare food availability data from the early 1900s to our current food system, life in general was a little different back then. At the turn of the century,

- average life expectancy was around 50

- the nation had 8,000 cars

- and about 10 miles of paved roads.

In 1909, neither assembly lines nor the Titanic had happened yet.

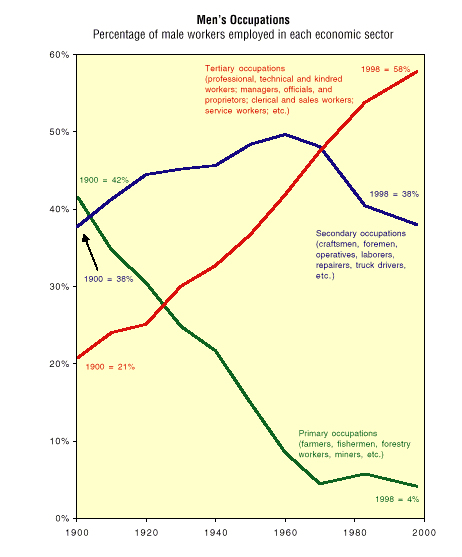

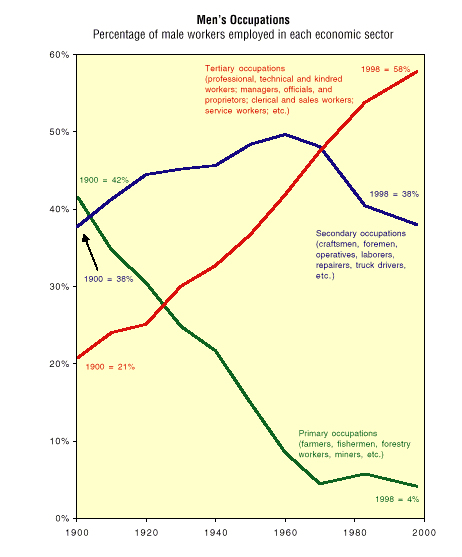

The labor force looked a little different too:

Primary occupations made up the largest percentage of male workers (42%)–farmers, fishermen, miners, etc.–what we would now call manual laborers. Another 21% were “blue collar” jobs, craftsmen, machine operators, and laborers whose activities in those early days of the Industrial Revolution, before many things became mechanized, must have required a considerable amount of energy. And not only was the work hard, there was a lot of it. At the turn of the century, the average workweek was 59 hours, or close to 6 10-hour days. And it wasn’t just men working. As our country shifted from a rural agrarian economy to a more urban industrialized one, women and children worked both on the farms and in the factories.

This is what is called “context.”

In the past, nutrition epidemiologists have always considered caloric intake to be a surrogate marker for activity level. To quote Walter Willett himself:

“Indeed, in most instances total energy intake can be interpreted as a crude measure of physical activity . . . ” (in: Willett, Walter. Nutritional Epidemiology. Oxford University Press, 1998, p. 276).

It makes perfect sense that Americans would have a lot of carbohydrate and calories in their food supply in 1909. Carbohydrates have been—and still are—a cheap source of energy to fuel the working masses. But it makes little sense to compare the carbohydrate intake of the labor force of 1909 to the labor force of 1997, as in the graph at the beginning of this post (remember the beginning of this post?).

After decades of decline, carbohydrate availability experienced a little upturn from the mid 1960s to the late 1970s, when it began to climb rapidly. But generally speaking, carbohydrate intake was lower during that time than at any point previously.

I’m not crazy about food availability data, but to be consistent with the graph at the top of the page, here it is.

Data based on per capita quantities of food available for consumption:

| | 1909 | 1975 | Change |
|---|---|---|---|
| Total calories | 3500 | 3100 | -400 |
| Carbohydrate calories | 2008 | 1592 | -416 |
| Protein calories | 404 | 372 | -32 |
| Total fat calories | 1098 | 1260 | +162 |
| | | | |
| Saturated fat (grams) | 52 | 47 | -5 |
| Mono- and polyunsaturated fat (grams) | 540 | 738 | +198 |
| Fiber (grams) | 29 | 20 | -9 |

To me, it looks pretty much like it should with regard to context. As our country went from pre- and early industrialized conditions to a fully-industrialized country of suburbs and station wagons, we were less active in 1970 than we were in 1909, so we consumed fewer calories. The calories we gave up were ones from the cheap sources of energy—carbohydrates—that would have been most readily available in the economy of a still-developing nation. Instead, we ate more fat.
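For anyone who wants to check my arithmetic, here is a minimal Python sketch of the table math. The numbers are copied from the table above; the function names are mine, made up for illustration, and the only outside assumption is the standard Atwater factor of about 4 calories per gram of carbohydrate.

```python
# Figures copied from the table above: (1909, 1975) calories available per capita.
AVAILABILITY = {
    "total": (3500, 3100),
    "carbohydrate": (2008, 1592),
    "protein": (404, 372),
    "fat": (1098, 1260),
}

# Assumption: standard Atwater factor, about 4 calories per gram of carbohydrate.
CALORIES_PER_GRAM_CARBOHYDRATE = 4


def change(nutrient):
    """Return the 1975 value minus the 1909 value for one table row."""
    in_1909, in_1975 = AVAILABILITY[nutrient]
    return in_1975 - in_1909


def percent_of_total(nutrient, year_index):
    """Share of total available calories; year_index 0 is 1909, 1 is 1975."""
    return 100 * AVAILABILITY[nutrient][year_index] / AVAILABILITY["total"][year_index]


def carbohydrate_grams(year_index):
    """Convert carbohydrate calories back to grams for one year."""
    return AVAILABILITY["carbohydrate"][year_index] / CALORIES_PER_GRAM_CARBOHYDRATE


if __name__ == "__main__":
    for nutrient in ("carbohydrate", "protein", "fat"):
        print(
            nutrient,
            f"change: {change(nutrient):+d} calories,",
            f"share: {percent_of_total(nutrient, 0):.1f}% -> {percent_of_total(nutrient, 1):.1f}%",
        )
    print("carbohydrate grams:", carbohydrate_grams(0), "->", carbohydrate_grams(1))
```

By this reckoning, carbohydrate goes from roughly 57% of available calories in 1909 to roughly 51% in 1975, while fat goes from roughly 31% to roughly 41%. The 1909 carbohydrate figure works out to about 502 grams, which squares with the "500 grams" in the argument I started with; the 1975 figure is about 398 grams.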

We can’t separate out “added fats” from “naturally-present fats” from this data, but if we use saturated fat vs. mono- and polyunsaturated fats as proxies for animal fats vs. vegetable oils (yes, I know that animal fats have lots of mono- and polyunsaturated fats, but alas, such are the limitations of the dataset), then it looks like Americans were making use of the soybean oil that was beginning to be manufactured in abundance during the 1950s and 1960s and was making its way into our food supply. (During this time, heart disease mortality was decreasing, an effect likely due more to warnings about the hazards of smoking, which began in earnest in 1964, than to dietary changes; although availability of unsaturated fats went up, that of saturated fats did not really go down.)

As for all those “healthy” carbohydrates that we were eating before we started getting fat? Using fiber as a proxy for level of “refinement” (as in the graph at the beginning of this post–remember the beginning of this post?), we seemed to be eating more refined carbohydrates in 1975 than in 1909–and yet, the obesity crisis was still just a gleam in Walter Willett’s eyes.

While our lives in 1909 differed greatly from our current environment, our lives in the 1970s were not all that much different than they are now. I remember. As much as it pains me to confess this, I was there. I wore bell bottoms. I had a bike with a banana seat (used primarily for trips to the candy store to buy Pixie Straws). I did macramé. My parents had desk jobs, as did most adults I knew. No adult I knew “exercised” until we got new neighbors next door. I remember the first time our new next-door neighbor jogged around the block. My brothers and sister and I plastered our faces to the picture window in the living room to scream with excitement every time she ran by; it was no less bizarre than watching a bear ride a unicycle.

In 1970, more men had white-collar than blue-collar jobs; jobs that primarily consisted of manual labor had reached their nadir. Children were largely excluded from the labor force, and women, like men, had moved from farm and factory jobs to more white (or pink) collar work. The data on this is not great (in the 1970s, we hadn’t gotten that excited about exercise yet) but our best approximation is that about 35% of adults–one of whom was my neighbor–exercised regularly, with “regularly” defined as “20 minutes at least 3 days a week” of moderately intense exercise. (Compare this definition, a total of 60 minutes a week, to the current recommendation, more than double that amount, of 150 minutes a week.)
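The comparison of the two exercise definitions is simple enough to spell out. A quick sketch, using only the numbers in the paragraph above (the variable and function names are my own, purely for illustration):

```python
# 1970s definition of "regular" exercise, from the paragraph above.
SESSION_MINUTES = 20
SESSIONS_PER_WEEK = 3

# Current recommendation, in minutes per week, as stated above.
CURRENT_RECOMMENDED_MINUTES = 150


def weekly_minutes(session_minutes, sessions_per_week):
    """Total minutes of exercise per week under a sessions-based definition."""
    return session_minutes * sessions_per_week


old_weekly = weekly_minutes(SESSION_MINUTES, SESSIONS_PER_WEEK)
ratio = CURRENT_RECOMMENDED_MINUTES / old_weekly

print(f"1970s definition: {old_weekly} minutes a week")
print(f"Current recommendation is {ratio:.1f} times that amount")
```

That is 60 minutes a week then versus 150 now, or 2.5 times as much, which is where "more than double" comes from.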

Not too long ago, the 2000 Dietary Guidelines Advisory Committee (DGAC) recognized that environmental context—such as the difference between America in 1909 and America in 1970—might lead to or warrant dietary differences:

“There has been a long-standing belief among experts in nutrition that low-fat diets are most conducive to overall health. This belief is based on epidemiological evidence that countries in which very low fat diets are consumed have a relatively low prevalence of coronary heart disease, obesity, and some forms of cancer. For example, low rates of coronary heart disease have been observed in parts of the Far East where intakes of fat traditionally have been very low. However, populations in these countries tend to be rural, consume a limited variety of food, and have a high energy expenditure from manual labor. Therefore, the specific contribution of low-fat diets to low rates of chronic disease remains uncertain. Particularly germane is the question of whether a low-fat diet would benefit the American population, which is largely urban and sedentary and has a wide choice of foods.” [emphasis mine – although whether our population in 2000 was largely “sedentary” is arguable]

The 2000 DGAC goes on to say:

“The metabolic changes that accompany a marked reduction in fat intake could predispose to coronary heart disease and type 2 diabetes mellitus. For example, reducing the percentage of dietary fat to 20 percent of calories can induce a serum lipoprotein pattern called atherogenic dyslipidemia, which is characterized by elevated triglycerides, small-dense LDL, and low high-density lipoproteins (HDL). This lipoprotein pattern apparently predisposes to coronary heart disease. This blood lipid response to a high-carbohydrate diet was observed earlier and has been confirmed repeatedly. Consumption of high-carbohydrate diets also can produce an enhanced post-prandial response in glucose and insulin concentrations. In persons with insulin resistance, this response could predispose to type 2 diabetes mellitus.

The committee further held the concern that the previous priority given to a “low-fat intake” may lead people to believe that, as long as fat intake is low, the diet will be entirely healthful. This belief could engender an overconsumption of total calories in the form of carbohydrate, resulting in the adverse metabolic consequences of high carbohydrate diets. Further, the possibility that overconsumption of carbohydrate may contribute to obesity cannot be ignored. The committee noted reports that an increasing prevalence of obesity in the United States has corresponded roughly with an absolute increase in carbohydrate consumption.” [emphasis mine]

Hmmmm. Okay, folks, that was in 2000—THIRTEEN years ago. If the DGAC was concerned about increases in carbohydrate intake—absolute carbohydrate intake, not just sugars, but sugars and starches—13 years ago, how come nothing has changed in our federal nutrition policy since then?

I’m not going to blame you if your eyes glaze over during this next part, as I get down and geeky on you with some Dietary Guidelines backstory:

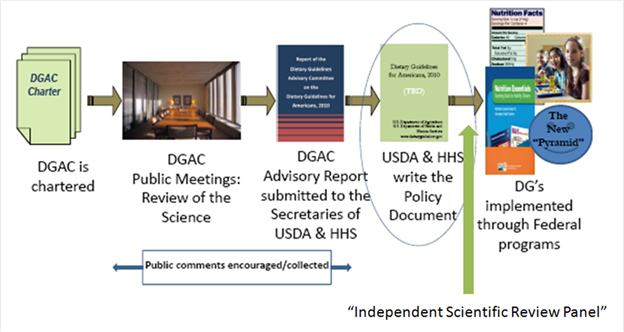

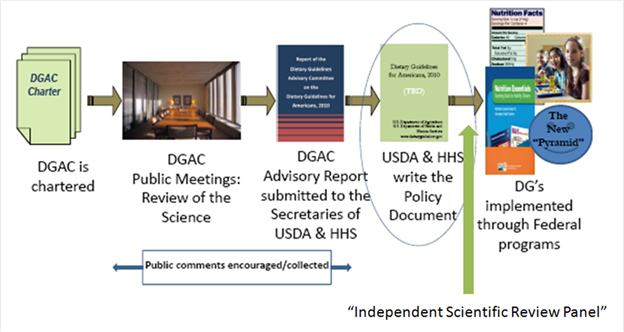

As with all versions of the Dietary Guidelines after 1980, the 2000 edition was based on a report submitted by the DGAC which indicated what changes should be made from the previous version of the Guidelines. And, as with all previous versions after 1980, the changes in the 2000 Dietary Guidelines were taken almost word-for-word from the suggestions given by the scientists on the DGAC, with few changes made by USDA or HHS staff. Although HHS and USDA took turns administrating the creation of the Guidelines, in 2000, no staff members from either agency were indicated as contributing to the writing of the final Guidelines.

But after those comments in 2000 about carbohydrates, things changed.

Beginning with the 2005 Dietary Guidelines, HHS and USDA staff members are in charge of writing the Guidelines, which are no longer considered to be a scientific document whose audience is the American public, but a policy document whose audience is nutrition educators, health professionals, and policymakers. Why and under whose direction this change took place is unknown.

The Dietary Guidelines process doesn’t have a lot of law holding it up. Most of what happens in regard to the Guidelines is a matter of bureaucracy, decision-making that takes place within USDA and HHS that is not handled by elected representatives but by government employees.

However, there is one mandate of importance: section 301 of the National Nutrition Monitoring and Related Research Act of 1990 (P.L. 101-445, 101st Cong., 2nd sess., October 22, 1990) requires that “The information and guidelines contained in each report required under paragraph shall be based on the preponderance of the scientific and medical knowledge which is current at the time the report is prepared.”

The 2000 Dietary Guidelines were (at least theoretically) scientifically accurate because scientists were writing them. But beginning in 2005, the Dietary Guidelines document recognizes the contributions of an “Independent Scientific Review Panel who peer reviewed the recommendations of the document to ensure they were based on a preponderance of scientific evidence.” [To read the whole sordid story of the “Independent Scientific Review Panel,” which appears to neither be “independent” nor to “peer-review” the Guidelines, check out Healthy Nation Coalition’s Freedom of Information Act results.] Long story short: we don’t know who–if anyone–is making sure the Guidelines are based on a complete and current review of the science.

Did HHS and USDA not like the direction that it looked like the Guidelines were going to take–with all that crazy talk about too many carbohydrates – and therefore made sure the scientists on the DGAC were farther removed from the process of creating them?

Hmmmmm again.

Dr. Janet King, chairwoman of the 2005 DGAC, had this to say, after her tenure creating the Guidelines was over: “Evidence has begun to accumulate suggesting that a lower intake of carbohydrate may be better for cardiovascular health.”

Dr. Joanne Slavin, a member of the 2010 DGAC, had this to say, after her tenure creating the Guidelines was over: “I believe fat needs to go higher and carbs need to go down,” and “It is overall carbohydrate, not just sugar. Just to take sugar out is not going to have any impact on public health.”

It looks like, at least in 2005 and 2010, some well-respected scientists (respected well enough to make it onto the DGAC) thought that—in the context of our current environment—maybe our continuing advice to Americans to eat more carbohydrate and less fat wasn’t such a good idea.

I think it is at about this point that I begin to hear the wailing and gnashing of teeth of those who don’t think Americans ever followed this advice to begin with, because—goodness knows—if we had, we wouldn’t be so darn FAT!

So did Americans follow the advice handed out in those early dietary recommendations? Or did Solid Fats and Added Sugars (SoFAS–as the USDA/HHS like to call them–as in “get up offa yur SoFAS and work your fatty acids off”) make us the giant tubs of lard that we are, just as the USDA/HHS says they did?

Stay tuned for the next episode of As the Calories Churn, when I attempt to settle those questions once and for all. And you’ll hear a big yellow blob with stick legs named Timer say, “I hanker for a hunk of–a slab or slice or chunk of–I hanker for a hunk of cheese!”

In an auditorium full of really smart people, I cannot have been the only person thinking that W and his data looked a little over-exposed. But–as we saw with the circumstances revealed by the Ramsden and Zamora paper last week–it can be hard to contradict a famous colleague, and in nutritional epidemiology, no one is famouser than W. It may be even harder, I suppose, when he is an invited guest and an apparently nice fellow. The Q&A was respectful and polite. Difficult as it is to believe, even I kept my mouth shut.
