Last week, the Dietary Guidelines Advisory Committee released the report containing its recommendations for the 2015 Dietary Guidelines for Americans. The report is 572 pages long, more than 100 pages longer than the last report, released 5 years ago. Longer than one of my blog posts, even. Despite its length and the tortured governmentalese in which it is written, its message is pretty clear and simple. So for those of you who would like to know what the report says, but don’t want to read the whole damn thing, I present, below, its essence:

Dear America,

You are sick–and fat. And it’s all your fault.

Face it. You screwed up. Somewhere in the past few decades, you started eating too much food. Too much BAD food. We don’t know why. We think it is because you are stupid.

We don’t know why you are stupid.

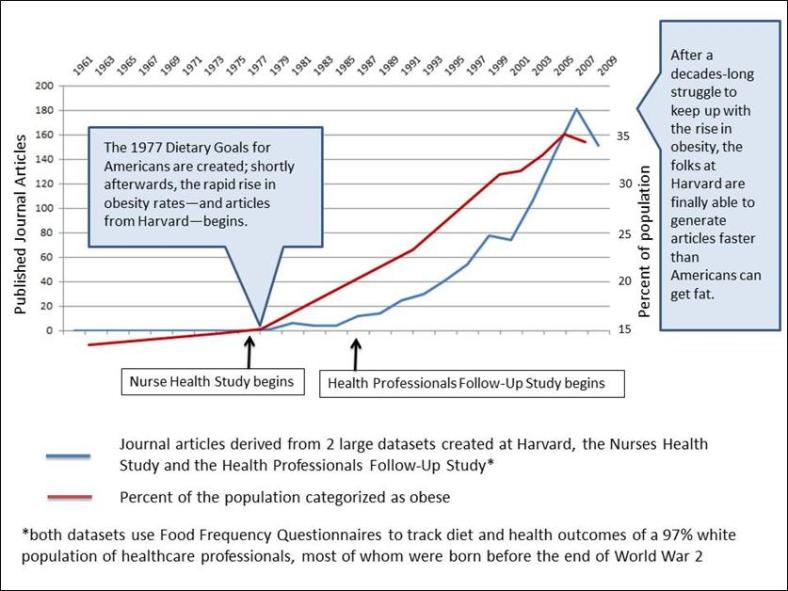

You used to be smart–at least about food–but somewhere in the late 1970s or early 1980s, you got stupid. Before then, we didn’t have to tell you what to eat. Somehow, you just knew. You ate food, and you didn’t get fat and sick.

But NOW, every five years we have to get together and rack our brains to try and figure out a way to tell you how to eat–AGAIN. Because no matter what we tell you, it doesn’t work.

The more we tell you how to eat, the worse your eating habits get. And the worse your eating habits get, the fatter and sicker you are. And the fatter and sicker you are, the more we have to tell you how to eat.

Look. You know we have no real way to measure your eating habits. Mostly because fat people lie about what they eat and most of you are now, technically speaking, fat. But we still know that your eating habits have gotten worse. How? Because you’re fat. And, y’know, sick. And the only real reason people get fat and sick is because they have poor eating habits. That much we do know for sure.

And because, for decades now, we have been telling you exactly what to eat so you don’t get fat and sick, we also know the only real reason people have poor eating habits is because they are stupid. So you must be stupid.

Let’s make this as clear as possible for you:

And though it makes our hearts heavy to say this, unfortunately, and through no fault of their own, people who don’t have much money are particularly stupid. We know this because they are sicker than people who have money. Of course, money has nothing to do with whether or not you are sick. It’s the food, stupid.

We’ll admit that some of the responsibility for this rests on our shoulders. When we started out telling you how to eat, we didn’t realize how stupid you were. That was our fault.

In 1977, a bunch of us got together to figure out how to make sure you would not get fat and sick. You weren’t fat and sick at the time, so we knew you needed our help.

We told you to eat more carbohydrates–a.k.a., sugars and starches–and less sugar. How simple is that? But could you follow this advice? Nooooooo. You’re too stupid.

We told you to eat food with less fat. We meant for you to buy a copy of the Moosewood Cookbook and eat kale and lentils and quinoa. But no, you were too stupid for that too. Instead, you started eating PRODUCTS that said “low-fat” and “fat-free.” What were you thinking?

We told you to eat less animal fat. Obviously, we meant JUST DON’T EAT ANIMALS. But you didn’t get it. Instead, you quit eating cows and started eating chickens. Hellooooo? Chickens are ANIMALS.

After more than three decades of us telling you how to eat, it is obvious you are too stupid to figure out how to eat. So we are here to make it perfectly clear, once and for all.

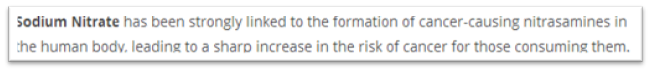

FIRST: Don’t eat food with salt in it.

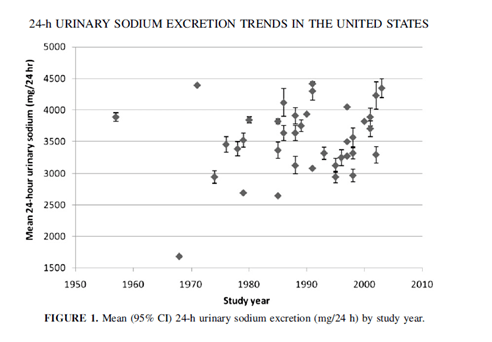

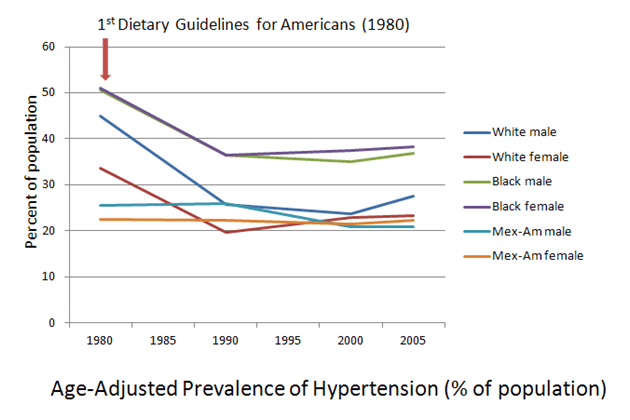

Even though food with salt in it doesn’t make you fat, it does raise your blood pressure. Maybe. Sometimes. And, yes, we know that your blood pressure has been going down for a few decades now, but it isn’t because you are eating less salt, because you’re not. And it’s true that we really have no idea whether or not reducing your intake of salt prevents disease. But all of that is beside the point.

Here’s the deal: Salt makes food taste good. And when food tastes good, you eat it. We’re opposed to that. But since you are too stupid to actually stop eating food, we are going to insist that food manufacturers stop putting salt in their products. That way, their products will grow weird microorganisms and spoil rapidly–and will taste like poop.

This will force everyone to stop eating food products and get kale from the farmers’ market (NO SALT ADDED) and lentils and quinoa in bulk from the food co-op (NO SALT ADDED). Got it?

Also, we are working on ways to make salt shakers illegal.

NEXT: Don’t eat animals. At all. EVER.

We told you not to eat animals because meat has lots of fat, and fat makes you fat. Then you just started eating skinny animals. So we’re scrapping the whole fat thing. Eat all the fat you want. Just don’t eat fat from animals, because that is the same thing as eating animals, stupid.

We told you not to eat animals because meat has lots of cholesterol, and dietary cholesterol makes your blood cholesterol go up. Now our cardiologist friends who work for pharmaceutical companies and our buds over at the American Heart Association have told us that avoiding dietary cholesterol won’t actually make your blood cholesterol go down. They say: If you want your blood cholesterol to go down, take a statin. Statins, in case you are wondering, are not made from animals so you can have all you want.

Eggs? you ask. We’ve ditched the cholesterol limits, so now you think you can eat eggs? Helloooo? Eggs are just baby chickens and baby chickens are animals and you are NOT ALLOWED TO EAT ANIMALS. Geez.

Yes, we are still hanging onto that “don’t eat animals because of saturated fat” thing, but we know it can’t last forever since we can’t actually prove that saturated fat is the evil dietary villain we’ve been saying it is. So …

Here’s the deal: Eating animals doesn’t just kill animals. It kills the planet. If you keep killing animals and eating them WE ARE ALL GOING TO DIE. And it’s going to be your fault, stupid.

And especially don’t eat red meat. C’mon. Do we have to spell this out for you? RED meat?

RED meat = COMMUNIST meat. Does Vladimir Putin look like a vegan? We thought not.

If you really must eat dead rotting flesh, we think it is okay to eat dead rotting fish flesh, as long as it is from salmon raised on ecologically sustainable fish farms by friendly people with college educations.

FINALLY: Stop eating–and drinking–sugar.

Okay, we know we told you to eat more carbohydrate food. And, yes, we know sugar is a carbohydrate. But did you really think we were telling you to eat more sugar? Look, if you must have sugar, eat some starchy grains and cereals. The only difference between sugar and starch is about 15 minutes in your digestive tract. But …

Here’s the deal: Sugar makes food taste good. And when food tastes good, you eat it. Like we said, we’re opposed to that. But since you are too stupid to actually stop eating food, we are going to insist that food manufacturers stop putting sugar in their products. That way, their products will grow weird microorganisms and spoil rapidly–and will taste like poop.

This will force everyone to stop eating food products and get kale from the farmers’ market (NO SUGAR ADDED) and lentils and quinoa in bulk from the food co-op (NO SUGAR ADDED). Got it?

Hey, we know what you’re thinking. You’re thinking “Oh, I’ll just use artificial sweeteners instead of sugar.” Oh NOOOO you don’t. No sugar-filled soda. No diet soda. Water only. Capiche?

So, to spell it all out for you once and for all:

DO NOT EAT food that has salt or sugar in it, i.e., food that tastes good. Also, don’t eat animals.

DO EAT kale from your local farmers’ market, lentils and quinoa from your local food co-op, plus salmon. Drink water. That’s it.

And, since we graciously recognize the diversity of this great nation, we must remind you that you can adapt the above dietary pattern to meet your own health needs, dietary preferences, and cultural traditions. Just as long as you don’t add salt, sugar, or dead animals.

Because we have absolutely zero faith you are smart enough to follow even this simple advice, we are asking for additional research to be done on your child-raising habits (Do you let your children eat food that tastes good? BAAAAD parent!) and your sleep habits (Do you dream about cheeseburgers? We KNOW you do and that must stop! No DEAD IMAGINARY ANIMALS!).

And, because we recognize your deeply ingrained stupidity when it comes to all things food, and because we know that food is the only thing that really matters when it comes to health, we are proposing that America create a national “culture of health” where healthy lifestyles are easier to achieve and normative.

“Normative” is a big fancy word that means if you eat what we tell you to eat, you are a good person and if you eat food that tastes good, you are a bad person. We will know you are a bad person because you will be sick. Or fat. Because that’s what happens to bad people who eat bad food.

We will kick off this “culture of health” by creating an Office of Dietary Wisdom that will make the healthy choice–kale, lentils, quinoa, salmon, and water–the easy choice for all you stupid Americans. We will establish a Food Czar to run the Office of Dietary Wisdom because nothing says “America, home of freedom and democracy” like the title of a 19th-century Russian monarch.*

The primary goal of the “culture of health” will be to enforce your right to eat what we’ve determined is good for you.

This approach will combine the draconian government overreach we all love with the lack of improvements we expect, resulting in a continued demand for our services as the only people smart enough to tell the stupid people how to eat.**

Look. We know we’ve been a little unclear in the past. And we know we’ve reversed our position on a number of things. Hey, our bad. And when, five years from now, you stupid Americans are as sick and fat as ever, we may have to change up our advice again based, y’know, on whatever evidence we can find that supports the conclusions we’ve already reached.

But rest assured, America.

No matter what the evidence says, we are never ever going to tell you it’s okay to eat salt, sugar, or animals. And, no matter what the evidence says, we are never ever going to tell you that it’s not okay to eat grains, cereals, or vegetable oils. And you can take that to the bank. We did.

Love and kisses,

Committee for Government Approved Information on Nutrition (Code name: G.A.I.N.)

***********************************************************************************

*Thank you, Steve Wiley.

**Thank you, Jon Stewart, for at least part of this line.