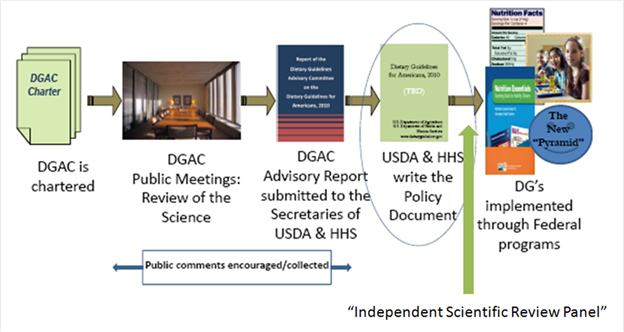

I’ve been meaning to blog about this for a while now, but as always: grad school. However, today in my inbox appeared a call to sign a petition sponsored by the Nutrition Coalition to have federal dietary guidance based on sound scientific evidence. Now, I’m all for that–assuming, of course, we could agree on what we mean by “sound” and by “scientific” and by “evidence” in the area of nutrition and chronic disease relationships.

Much to my surprise, the first reform that is called for is “to let Americans know that the low-fat diet is no longer officially recommended.” This part makes sense, although it’s kind of old news (see below). The Dietary Guidelines for Americans (affectionately known as the DGA) haven’t recommended a “low-fat” diet since 1995.

But the petition goes on to say that “the DGA have quietly dropped previous limits on total fat.” So what’s my problem?

Walter Willett and Frank Hu say the limits on fat are gone.

Nina Teicholz says the limits on fat are gone.

Great minds think alike, right? Those pesky limits must be gone.

Inconveniently, the folks at the USDA and HHS say this:

An upper limit on total fat intake was not removed from the DGA.

I am less concerned about whether or not the upper limits on fat are officially gone (they aren’t), and more interested in why parties as diverse in their perspectives on diet as the dudes at Harvard and the dudettes at the Nutrition Coalition would both agree that they are (when they aren’t). I mean, we’re talking about folks who know more than a little about nutrition and policy, and they can’t seem to figure out what the DGA actually say.

Could it be that the USDA and HHS are attempting to rhetorically distance themselves from limits on fat without the policy implications that come with making that official? They’re willing to date the “no more low fat” thinking, but don’t want to put a ring on it?

After all, whether or not you think people followed low-fat guidance, or didn’t follow low-fat guidance, or we can’t tell one way or the other because everybody lies about what they eat anyway, using the authority of the federal government to prescribe a single dietary pattern to everyone over the age of two in the hopes of reducing the risk of every single chronic disease known to mankind–including obesity, which wasn’t even a disease until we made it one–simply did not work out the way we thought it would.

I’m not arguing here about whether or not a low-fat diet is a problem (I suspect it is for a lot of folks and not so much for others), but it seems low-fat dietary guidance is.

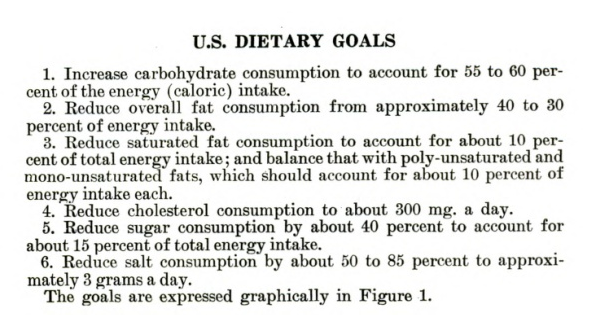

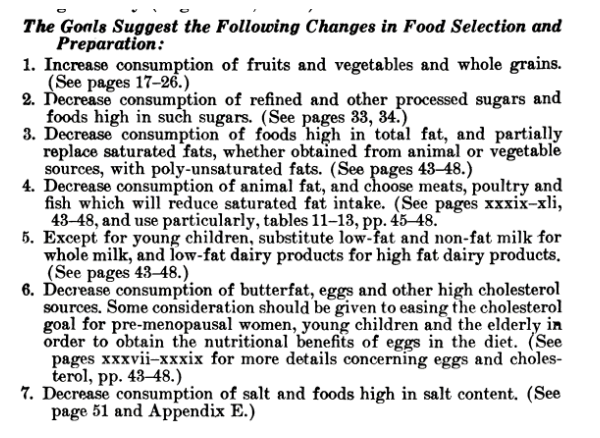

The folks who write the DGA seem to have recognized this issue 17 years ago. In 1995, the guidance on fat in the DGA said “Choose a diet low in fat,” and specifically, to limit total fat intake to 30% of calories. But then this happened:

- In 2000, the DGA said, “Choose a diet that is moderate in total fat.” But. The limit on total fat intake remained exactly the same: 30% of calories. So rhetorically, no more low-fat diet; materially, same old, same old.

- In 2005, both the “low fat” and “moderate fat” language are avoided. In the meantime, the total fat intake limit is, quietly, raised to 35% of calories. Maybe they thought if they shifted the limit on total fat without actually saying anything about it, no one would notice. And, it seems, pretty much no one did.

- In 2010, the range for fat intake remains the same as in 2005: 20-35% of calories.

- In 2015, nothing changes. The range for fat intake is 20-35% of calories for adults and 25-35% for people younger than 18 years old.

In other words, it does look like the DGA have slowly been modifying–without completely relinquishing–their call for Americans not to eat a lot of fat. At the same time, language in the DGA–and in my correspondence with the USDA/HHS–makes it sound like the fat limits aren’t their fault. They are just following (reinforcing, supporting, and promoting as policy) the limits set by the National Academies.

Which may explain why it did take some persistence on my part to get this info from the government. (If a PhD program has taught me nothing else, it has taught me persistence.)

In my first email, I asked what I thought was a pretty straightforward question:

In the 2015 DGA policy document, is there a recommended limit on the percentage of calories in the diet that should come from fat, and if so, what is it?

I got this response:

Thank you for your email. The 2015-2020 Dietary Guidelines for Americans recommends following a healthy eating pattern that accounts for all foods and beverages within an appropriate calorie level. Key Recommendations describe the components of a healthy eating pattern and highlight components to limit. Additionally, supporting text acknowledges that healthy eating patterns should be within the Acceptable Macronutrient Distribution Ranges (AMDRs) for protein, carbohydrates, and total fats. For example, page 35 of the PDF states that “healthy eating patterns can be flexible with respect to the intake of carbohydrate, protein, and fat within the context of the AMDR,” and Table A3-1, which outlines the Healthy U.S.-Style Eating Pattern, one example of a healthy eating pattern, states that “calories from protein, carbohydrate, and total fats should be within the AMDRs.”

In other words, wtf?

I tried again:

Thank you so much for your reply. But I’m not sure it answers my question in that the information that you point to seems to have been interpreted different ways.

The heading for the table which references the AMDR in the DGA document says this: “Daily Nutritional Goals for Age-Sex Groups Based on Dietary Reference Intakes and Dietary Guidelines Recommendations.” Beneath that it indicates that, except for children under the age of three, total dietary fat should be limited to no more than 35% of calories. This, to me, sounds like a limit on total fat calories as part of the official policy document that is the DGA.

But, the parts of the text you reference that point to the AMDR seem to have been interpreted by others as the AMDR recommending one thing, while the DGA recommend something else.

Dr. Frank Hu has said about the 2015-2020 DGA that, “Another important positive change is the removal of an upper limit for total dietary fat …” Dr. Walter Willett has said, “The 2016 Dietary Guidelines are improved in some important ways, especially the removal of the restriction on percentage of calories from total fat …”

So, to put it quite simply, have the upper limits on percentage of calories from total fat been removed? Would it be possible to get an official “yes” or “no” answer to that question?

And this is what I got back:

Thank you for your email. The 2015-2020 DGAs recommends total fat intake within the AMDRs. As you know, the AMDRs are set by the Health and Medicine Division (formerly the Institute of Medicine), not through the Dietary Guidelines process.

An upper limit on total fat intake was not removed from the DGA.

The AMDR for total fats for adults is 20-35% of total kcal.

So, long story long: Have the “official” upper limits on total fat intake been lifted? No.

Do the officials in charge of the “official” limits want to come right out and say this? Um, well, uh … hmmm … perhaps maybe not in so many words.

And every 5 years. There are. So. Many. More. Words.

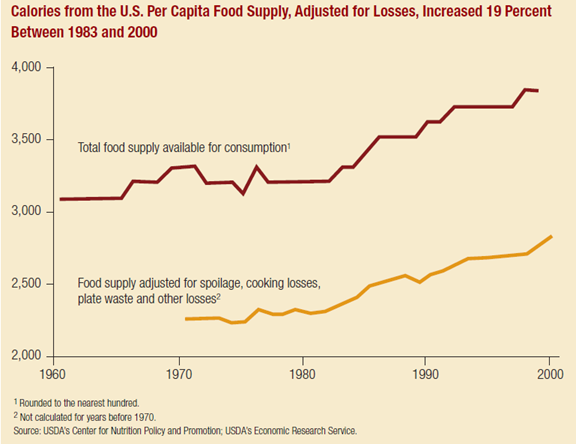

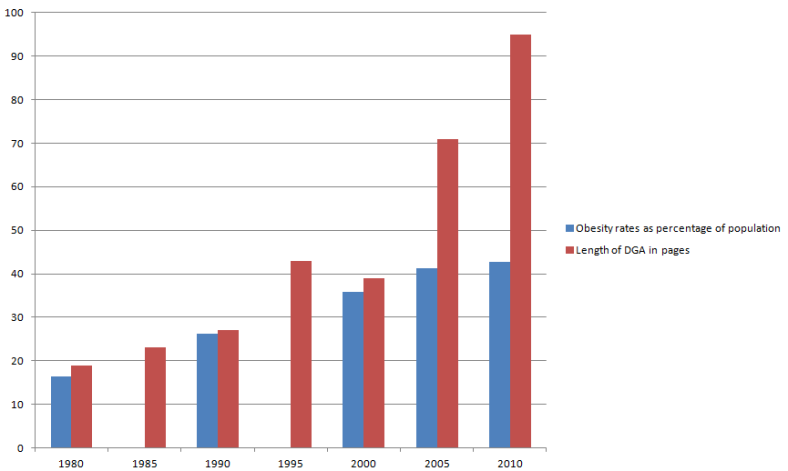

Speaking strictly in terms of association, we could say that the number of words in the Dietary Guidelines is directly and strongly related to increases in rates of obesity.

Maybe the problem isn’t the fat that is or is not in the diet. Maybe the problem is the words about fat that are–or are not, depending on your version of reality–in the guidelines.

And if you’ve made it this far and are interested in still more words on nutrition and rhetoric, I’ve had the pleasure of getting to chat with a number of different folks about my studies and where they are taking me. If you want to know what I’m reading, writing, and thinking about in that never-ending PhD program I’m in, here it is:

Diana Rogers at Sustainable Dish: We do a deep dive into the social and political history behind the Dietary Guidelines, the price of meat in the 1970s, and how Diet for a Small Planet is not a low-fat cookbook. Tune in and you can hear me talk about the “dietary imaginary” and how we’ve lost the ability to think for ourselves about food.

Peter Defty at Optimal Fat Burning: We chat about the Dietary Guidelines, calories, and changing body size norms. Tune in and you can hear me talk about how a post-menopausal body can turn even the best diet into body fat and a bad attitude.

Laura and Kelsey at The Ancestral RDs: We talk about ethical problems associated with the Dietary Guidelines and how a feisty little start-up in Canada is working to make them irrelevant.

Speaking of that feisty little start-up in Canada, Approach Analytics, here’s some more about them and their work to “democratize the nutrition knowledge-making process.”

Please join me at AHS 2017, where–surprise–I’ll just keep on talking! I’m looking forward to discussing the ideas behind democratizing nutrition knowledge-making and more about how “n of 1” nutritional approaches, together with the power of population-based information, can sidestep an information-gathering system that serves to maintain the status of mainstream dietary guidance, but does little to help the public.

Finally, if you missed AHS 2016 and you haven’t heard me yammer on enough about the 2015 Dietary Guidelines, now’s your chance. Pull this up on YouTube and you’ll get to see me do a couple of different versions of a happy dance.